#neuroscience

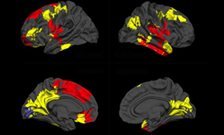

MR images showing a patient with recurrent glioblastoma responding to anti-angiogenic therapy by a reduction in abnormal tumor vessel calibers and a change in the direction of the vessel vortex curve estimated from a combined gradient-echo (GE) and spin-echo (SE) MR signal readout. The change from a predominantly counter-clockwise vessel vortex direction at baseline (days -5 and -1) to a predominantly clockwise vessel vortex direction during anti-angiogenic therapy (days 1, 28, 56 and 112) indicates a dramatic transformation in vascular morphology during anti-angiogenic therapy, resulting in increased overall survival. Credit: Kyrre E. Emblem

New MR analysis technique reveals brain tumor response to anti-angiogenesis therapy

A new way of analyzing data acquired in MR imaging appears to be able to identify whether or not tumors are responding to anti-angiogenesis therapy, information that can help physicians determine the most appropriate treatments and discontinue ones that are ineffective. In their report receiving online publication in Nature Medicine, investigators from the Martinos Center for Biomedical Imaging at Massachusetts General Hospital (MGH) describe how their technique, called vessel architectural imaging (VAI), was able to identify changes in brain tumor blood vessels within days of the initiation of anti-angiogenesis therapy.

“Until now the only ways of obtaining similar data on the blood vessels in patients’ tumors were either taking a biopsy, which is a surgical procedure that can harm the patients and often cannot be repeated, or PET scanning, which provides limited information and exposes patients to a dose of radiation,” says Kyrre Emblem, PhD, of the Martinos Center, lead and corresponding author of the report. “VAI can acquire all of this information in a single MR exam that takes less than two minutes and can be safely repeated many times.”

Previous studies in animals and in human patients have shown that the ability of anti-angiogenesis drugs to improve survival in cancer therapy stems from their ability to “normalize” the abnormal, leaky blood vessels that usually develop in a tumor, improving the perfusion of blood throughout a tumor and the effectiveness of chemotherapy and radiation. In the deadly brain tumor glioblastoma, MGH investigators found that anti-angiogenesis treatment alone significantly extends the survival of some patients by reducing edema, the swelling of brain tissue. In the current report, the MGH team uses VAI to investigate how these drugs produce their effects and which patients benefit.

Advanced MR techniques developed in recent years can determine factors like the size, radius and capacity of blood vessels. VAI combines information from two types of advanced MR images and analyzes them in a way that distinguishes among small arteries, veins and capillaries; determines the radius of these vessels; and shows how much oxygen is being delivered to tissues. The MGH team used VAI to analyze MR data acquired in a phase 2 clinical trial – led by Tracy Batchelor, MD, director of the Pappas Center for Neuro-Oncology at MGH and a co-author of the current paper – of the anti-angiogenesis drug cediranib in patients with recurrent glioblastoma. The images had been taken before treatment started and then 1, 28, 56, and 112 days after it was initiated.

In some patients, VAI identified changes reflecting vascular normalization within the tumors – particularly changes in the shape of blood vessels – after 28 days of cediranib therapy and sometimes as early as the next day. Of the 30 patients whose data was analyzed, VAI indicated that 10 were true responders to cediranib, whereas 12 who had a worsening of disease were characterized as non-responders. Data from the remaining 8 patients suggested stabilization of their tumors. Responding patients ended up surviving six months longer than non-responders, a significant difference for patients with an expected survival of less than two years, Emblem notes. He adds that quickly identifying those whose tumors don’t respond would allow discontinuation of the ineffective therapy and exploration of other options.

Gregory Sorensen, MD, senior author of the Nature Medicine report, explains, “One of the biggest problems in cancer today is that we do not know who will benefit from a particular drug. Since only about half the patients who receive a typical anti-cancer drug benefit and the others just suffer side effects, knowing whether or not a patient’s tumor is responding to a drug can bring us one step closer to truly personalized medicine – tailoring therapies to the patients who will benefit and not wasting time and resources on treatments that will be ineffective.” Formerly with the Martinos Center, Sorensen is now with Siemens Healthcare.

Study co-author Rakesh Jain, PhD, director of the Steele Laboratory in the MGH Department of Radiation Oncology, adds, “This is the most compelling evidence yet of vascular normalization with anti-angiogenic therapy in cancer patients and how this concept can be used to select patients likely to benefit from these therapies.”

Lead author Emblem notes that VAI may help further improve understanding of how abnormal tumor blood vessels change during anti-angiogenesis treatment and could be useful in the treatment of other types of cancer and in vascular conditions like stroke. He and his colleagues are also exploring whether VAI can identify which glioblastoma patients are likely to respond to anti-angiogenesis drugs even before therapy is initiated, potentially eliminating treatment destined to be ineffective. A postdoctoral research fellow at the Martinos Center at the time of the study, Emblem is now a principal investigator at Oslo University Hospital in Norway and maintains an affiliation with the Martinos Center.

Researchers Gain Insight into How Ion Channels Control Heart and Brain Electrical Activity

Virginia Commonwealth University researchers studying a special class of potassium channels known as GIRKs, which serve important functions in heart and brain tissue, have revealed how they become activated to control cellular excitability.

The findings advance the understanding of the interaction between a family of signaling proteins called G proteins, and a special type of cell membrane ion pore called G protein-sensitive, inwardly rectifying potassium (GIRK) channels. The findings may one day help researchers develop targeted drugs to treat conditions of the heart such as atrial fibrillation.

In the study, published this week in the Online First section of Science Signaling, a publication of the American Association for the Advancement of Science (AAAS), researchers used a computational approach to predict the interactions between G proteins and a GIRK channel.

Rahul Mahajan, an M.D./Ph.D. candidate in the VCU School of Medicine’s Department of Physiology and Biophysics, undertook this problem for his dissertation work, under the mentorship of Diomedes E. Logothetis, Ph.D., chair of the Department of Physiology and Biophysics and the John D. Bower Endowed Chair in Physiology in the VCU School of Medicine. They developed a model and tested its predictions in cells, demonstrating how G proteins cause activation of GIRKs.

“Malfunctions of GIRK channels have been implicated in chronic atrial fibrillation, as well as in drug abuse and addiction,” said Logothetis, who is an internationally recognized leader in the study of ion channels and cell signaling mechanisms.

“Understanding the structural mechanism of Gβγ activation of GIRK channels could lead to rational based drug design efforts to combat chronic atrial fibrillation.”

In chronic atrial fibrillation, the GIRK channel is believed to be inappropriately open. According to Logothetis, if researchers are able to target only the specific site that keeps the channel inappropriately open, then any unrelated channels could be left unaltered, thus avoiding unwanted side effects.

Crystal structures of GIRK channels, which preceded the current study, have revealed two constrictions of the ion permeation pathway that researchers call “gates”: one at the inner leaflet of the membrane bilayer and the other close by in the cytosol, which is the liquid found inside cells.

“The structure of the Gβγ-GIRK1 complex reveals that Gβγ inserts a part of it in a cleft formed by two cytosolic loops of two adjacent channel subunits,” Logothetis said. “This is also the place where alcohols bind to activate the channel. One can think of this cleft as a clam that has its shells either open or shut closed. Stabilization of this cleft in the ‘open’ position stabilizes the cytosolic gate in the open state.”

GIRKs are activated when they interact with G proteins coupled to receptors bound to stimulatory hormones or neurotransmitters. In heart tissue, acetylcholine released by the vagus nerve activates these channels, which hyperpolarize the membrane potential and slow heart rate. In brain tissue, GIRKs inhibit excitation by acting at postsynaptic cells.

G proteins are composed of three subunits, α, β, and γ. Since 1987, researchers have known that the Gβγ subunits directly activate the atrial GIRK channel, but an atomic resolution picture of how the two proteins interact remained elusive until now.

Moving forward, the team would like to use computational and experimental approaches to build and test the structures of the rest of the components of the G protein complex – for example, the Gα subunits and the G protein-coupled receptor – around the Gβγ-channel complex, which is the structure the team has already achieved.

8-Year-Old Never Ages, Could Reveal ‘Biological Immortality’

Gabby Williams has the facial features and skin of a newborn, and she is just as dependent. Her mother feeds, diapers and cradles her tiny frame as she did the day she was born.

The little girl from Billings, Mont., is 8 years old, but weighs only 11 pounds. Gabby has a mysterious condition, shared by only a handful of others in the world, that slows her rate of aging.

For the past two years, a doctor who has been trying to find the genetic off-switch to stop the aging process has been studying Gabby, as well as two other people who have striking similarities.

A 29-year-old Florida man has the body of a 10-year-old, and a 31-year-old Brazilian woman is the size of a 2-year-old. Like Gabby, neither seems to grow older.

Unraveling what these three people may have in common is the subject of a TLC television special, “40-Year-Old Child: A New Case,” which airs Monday, Aug. 19, at 10 p.m. ET. The show is a follow-up to Gabby’s story, which aired last year.

“In some people, something happens to them and the development process is retarded,” said medical researcher Richard F. Walker. “The rate of change in the body slows and is negligible.”

Walker is retired from the University of Florida Medical School and now does his research at All Children’s Hospital in St. Petersburg.

“My whole career has been focused on the aging process,” he told ABCNews.com. “My fixation has been not on the consequences but the cause of it.”

Not only do the people he’s studying have a growth rate one-fifth that of others, but they also live with a variety of other medical problems, including deafness and the inability to walk, eat or even speak.

“Gabrielle hasn’t changed since pretty much forever,” said her mother, Mary Margret Williams, 38. “She has gotten a little longer and we have jumped into putting her in size 3-6 month clothes instead of 0-3 months for the footies.

“Last time we weighed her she was up a pound to 11 pounds and she’s gotten a few more haircuts,” she told ABCNews.com. “Other than that, she hasn’t changed much since the [2012] show.”

Williams, who works part-time at a dermatologist’s office, and her husband, a corrections officer for the state, share the child care responsibilities for their perpetual infant.

Walker explains that physiological change, or what he calls “developmental inertia,” is essential for human growth. Maturation occurs after reproduction.

“Without that process we never develop,” he said. “When we develop, all the pieces of our body come together and change and are coordinated. Otherwise, there would be chaos.”

But, said Walker, the body does not have a “stop switch” for this development. “What happens is we become mature at age 20 and continue to change.”

The first subtle internal body changes of aging are seen in the 30s and become more visible in the 40s.

“There is a progressive erosion of internal order as a result of developmental inertia,” he said.

In one of the girls Walker has studied, he found damage to one of the genes that causes developmental inertia, a finding that he said is significant. He also suspects the mutations are on the regulatory genes on the second female X chromosome.

“If we could identify the gene and then at young adulthood we could silence the expression of developmental inertia, find an off-switch, when you do that, there is perfect homeostasis and you are biologically immortal.”

Now Walker doesn’t mean that people will never die. Disease and accidents will still end human life.

“But you wouldn’t have the later years – you’d remain physically and functionally able,” he said.

That is why he believes his study of Gabby Williams’ genetic code is so important. “She fits the model,” said Walker.

“We’ve been on this journey to find out, are my other children at any risk in having a child like Gabrielle,” said Williams, who has five other children between the ages of 1 and 10.

“We did find out with Dr. Walker when he did the [gene] sequencing that it’s not something we can pass on but just an abnormality, a mutated gene that was just happenstance,” she said. “That was a relief for us.”

At first, when the Williams family members found out about Walker’s research, they hesitated to become guinea pigs in the studies that would promote a so-called “fountain of youth.”

“There was some concern,” she said. “We are good Catholics, God-fearing people and we believe we are meant to get old – the process of life – and meant to die. It was scary to think about, and we did not want to be part of it.”

But as they talked further with Walker, the family realized that his research was designed to help people struggling with the impairments of old age.

“Alzheimer’s is one of the scariest diseases out there,” said Williams. “If what Gabrielle holds inside of her would find a cure – for sure we would be a part of the research project. We have faith that Dr. Walker and the scientific community do find something focused more on the disease of aging, rather than making you 35 for the rest of your life.”

As for Gabby’s life span, her doctors cannot say what that will look like.

“From the time of her birth, we didn’t think she would be with us very long,” said her mother. “The fact is she is now going on 9 years. She kind of surpassed my expectations from the get go.

“It’s not something I worry about,” said Williams, who said she trusts that God has a plan for her infantile daughter.

“When he is ready to take her back, it will be sad,” she said. “But what a glorious thing it will be for Gabby to go to heaven one day. I know it will happen, but I am not hoping it’s any day soon.”

Continuously eating fatty foods perturbs communication between the gut and brain, which in turn perpetuates a bad diet.

A chronic high-fat diet is thought to desensitize the brain to the feeling of satisfaction that one normally gets from a meal, causing a person to overeat in order to achieve the same high again. New research published today (August 15) in Science, however, suggests that this desensitization actually begins in the gut itself, where production of a satiety factor, which normally tells the brain to stop eating, becomes dialed down by the repeated intake of high-fat food.

“It’s really fantastic work,” said Paul Kenny, a professor of molecular therapeutics at The Scripps Research Institute in Jupiter, Florida, who was not involved in the study. “It could be a so-called missing link between gut and brain signaling, which has been something of a mystery.”

While pork belly, ice cream, and other high-fat foods produce an endorphin response in the brain when they hit the taste buds, according to Kenny, the gut also sends signals directly to the brain to control our feeding behavior. Indeed, mice nourished via gastric feeding tubes, which bypass the mouth, exhibit a surge in dopamine—a neurotransmitter promoting reinforcement in the brain’s reward circuitry—similar to that experienced by those eating normally.

This dopamine surge occurs in response to feeding in both mice and humans. But evidence suggests that dopamine signaling in the brain is deficient in obese people. Ivan de Araujo, a professor of psychiatry at the Yale School of Medicine, has now discovered that obese mice on a chronic high-fat diet also have a muted dopamine response when receiving fatty food via a direct tube to their stomachs.

To determine the nature of the dopamine-regulating signal emanating from the gut, Araujo and his team searched for possible candidates. “When you look at animals chronically exposed to high-fat foods, you see high levels of almost every circulating factor—leptin, insulin, triglycerides, glucose, et cetera,” he said. But one class of signaling molecule is suppressed. Of these, Araujo’s primary candidate was oleoylethanolamide. Not only is the factor produced by intestinal cells in response to food, he said, but during chronic high-fat exposure, “the suppression levels seemed to somehow match the suppression that we saw in dopamine release.”

Araujo confirmed oleoylethanolamide’s dopamine-regulating ability in mice by administering the factor via a catheter to the tissues surrounding their guts. “We discovered that by restoring the baseline level of [oleoylethanolamide] in the gut … the high-fat fed animals started having dopamine responses that were indistinguishable from their lean counterparts.”

The team also found that oleoylethanolamide’s effect on dopamine was transmitted via the vagus nerve, which runs between the brain and abdomen, and was dependent on its interaction with a transcription factor called PPAR-α.

Oleoylethanolamide levels are also reduced in fasting animals and increase in response to eating, communicating with the brain to stop further consumption once the belly is full. Indeed, oleoylethanolamide is a known satiety factor. Therefore, when chronic consumption of high-fat food diminishes its production, the satisfaction signal is not achieved, and the brain is essentially “blind to the presence of calories in the gut,” said Araujo, and thus demands more food.

It is not clear why a chronic high-fat diet suppresses the production of oleoylethanolamide. But once the vicious cycle starts, it is hard to break because the brain is receiving its information subconsciously, said Daniele Piomelli, a professor at the University of California, Irvine, and director of drug discovery and development at the Italian Institute of Technology in Genoa.

“We eat what we like, and we think we are conscious of what we like, but I think what this [paper] and others are indicating is that there is a deeper, darker side to liking—a side that we’re not aware of,” Piomelli said. “Because it is an innate drive, you can not control it.” Put another way, even if you could trick your taste buds into enjoying low-fat yogurt, you’re unlikely to trick your gut.

The good news, however, is that “there is no permanent impairment in the [animals’] dopamine levels,” Araujo said. This suggests that if drugs could be designed to regulate the oleoylethanolamide-to-PPAR-α pathway in the gut, Kenny added, it could have “a huge impact on people’s ability to control their appetite.”

“It was like red-hot pokers needling one side of my face,” says Catherine, recalling the cluster headaches she experienced for six years. “I just wanted it to stop.” But it wouldn’t – none of the drugs she tried had any effect.

Thinking she had nothing to lose, last year she enrolled in a pilot study to test a handheld device that applies a bolt of electricity to the neck, stimulating the vagus nerve – the superhighway that connects the brain to many of the body’s organs, including the heart.

The results of the trial were presented last month at the International Headache Congress in Boston, and while the trial is small, the findings are positive. Of the 21 volunteers, 18 reported a reduction in the severity and frequency of their headaches, rating them, on average, 50 per cent less painful after using the device daily and whenever they felt a headache coming on.

This isn’t the first time vagal nerve stimulation has been used as a treatment – but it is one of the first that hasn’t required surgery. Some people with epilepsy have had a small generator that sends regular electrical signals to the vagus nerve implanted into their chest. Implanted devices have also been approved to treat depression. What’s more, there is increasing evidence that such stimulation could treat many more disorders from headaches to stroke and possibly Alzheimer’s disease.

The latest study suggests it is possible to stimulate the nerve through the skin, rather than resorting to surgery. “What we’ve done is figured out a way to stimulate the vagus nerve with a very similar signal, but non-invasively through the neck,” says Bruce Simon, vice-president of research at New Jersey-based ElectroCore, makers of the handheld device. “It’s a simpler, less invasive way to stimulate the nerve.”

Cluster headaches are thought to be triggered by the overactivation of brain cells involved in pain processing. The neurotransmitter glutamate, which excites brain cells, is a prime suspect. ElectroCore turned to the vagus nerve as previous studies had shown that stimulating it in people with epilepsy releases neurotransmitters that dampen brain activity.

When the firm used a smaller version of its device on rats, it found that the device reduced glutamate levels and excitability in these pain centres. Other studies have shown that vagus nerve stimulation causes the release of inhibitory neurotransmitters which counter the effects of glutamate.

The big question is whether a non-implantable device can really trigger changes in brain chemistry in humans, or whether people are simply experiencing a placebo effect. “The vagus nerve is buried deep in the neck, and something that’s delivering currents through the skin can only go so deep,” says Mike Kilgard of the University of Texas at Dallas. As you turn up the voltage, there’s a risk of it activating muscle fibres that trigger painful cramps, he adds.

Simon says that volunteers using the device haven’t reported any serious side effects. He adds that ElectroCore will soon publish data showing changes in brain activity in humans after using the device. Placebo-controlled trials are also about to start.

Catherine has been using it for a year without ill effect. “I can now function properly as a human being again,” she says.

The many uses of the wonder nerve

Coma, irritable bowel syndrome, asthma and obesity are just some of the disparate conditions that vagus nerve stimulation may benefit and for which human trials are under way.

It might also help people with tinnitus. Although people with tinnitus complain of ringing in their ears, the problem actually arises because too many neurons fire in the auditory part of the brain when certain frequencies are heard.

Mike Kilgard of the University of Texas at Dallas reasoned that if people were played tones that didn’t trigger tinnitus while the vagus nerve was stimulated, this might coax the rogue neurons into firing in response to these frequencies instead. “By activating this nerve we can enhance the brain’s ability to rewire itself,” he says.

He has so far tested the method in rats and in 10 people with tinnitus, using an implanted device to stimulate the nerve. Not everyone noticed an improvement, but even so Kilgard is planning a larger trial. The work was presented at a meeting of the International Union of Physiological Sciences in Birmingham, UK, last month. The technique is also being tested in people who have had a stroke.

“If these studies stand up it could be worth changing the name of the vagus nerve to the wonder nerve,” says Sunny Ogbonnaya at Cork University Hospital in Ireland.

Neuroscientists often use electroencephalography (EEG) as an inexpensive way to record electrical signals in the brain. Though it would be useful to run these recordings for long periods of time, that usually isn’t practical: EEG recording traditionally involves attaching many electrodes and cables to a patient’s scalp.

Now engineers at Imperial College in London have developed an EEG device that can be worn inside the ear, like a hearing aid. They say the device will allow scientists to record EEGs for several days at a time; this would allow doctors to monitor patients who have regularly recurring problems like seizures or microsleep.

“The ideal is to have a very stable recording system, and recordings which are repeatable,” explains co-creator Danilo Mandic. “It’s not interfering with your normal life, because there are acoustic vents so people can hear. After a while, they forget they’re having an EEG.”

By nestling the EEG inside the ear, the engineers avoid a lot of signal noise usually introduced by body movement. They can also ensure that the electrodes are always placed in exactly the same spot, which, they say, will make repeated readings more reliable.

Since the device attaches to just one area, it can record only from the temporal region. This limits its potential applications to events that involve local activity. Tzzy-Ping Jung, co-director of the University of California, San Diego’s Center for Advanced Neurological Engineering, says that this does not mean the device will not be valuable.

“Different modalities will have different applications. I would not rule out the usefulness of any modalities,” says Jung. “I think it’s a very good idea with very promising results.”

From frogs to humans, selecting a mate is complicated. Females of many species judge suitors based on many indicators of health or parenting potential. But it can be difficult for males to produce multiple signals that demonstrate these qualities simultaneously.

In a study of gray tree frogs, a team of University of Minnesota researchers discovered that females prefer males whose calls reflect the ability to multitask effectively. In this species (Hyla chrysoscelis) males produce “trilled” mating calls that consist of a string of pulses.

Typical calls range in duration from 20 to 40 pulses per call and occur at a rate of 5 to 15 calls per minute. Males face a trade-off between call duration and call rate, but females preferred calls that are longer and more frequent, which is no simple task.

The findings were published in the August issue of Animal Behaviour.

“It’s kind of like singing and dancing at the same time,” says Jessica Ward, a postdoctoral researcher who is lead author for the study. Ward works in the laboratory of Mark Bee, a professor in the College of Biological Sciences’ Department of Ecology, Evolution and Behavior.

The study supports the multitasking hypothesis, which suggests that females prefer males who can do two or more hard-to-do things at the same time because these are especially good quality males, Ward says. The hypothesis, which explores how multiple signals produced by males influence female behavior, is a new area of interest in animal behavior research.

By listening to recordings of 1,000 calls, Ward and colleagues learned that males are indeed forced to trade off call duration and call rate. That is, males that produce relatively longer calls only do so at relatively slower rates.

“It’s easy to imagine that we humans might also prefer multitasking partners, such as someone who can successfully earn a good income, cook dinner, manage the finances and get the kids to soccer practice on time.”

The study was carried out in connection with Bee’s research goal, which is understanding how female frogs are able to distinguish individual mating calls from a large chorus of males. By comparison, humans, especially as we age, lose the ability to distinguish individual voices in a crowd. This phenomenon, called the “cocktail party” problem, is often the first sign of a diminishing ability to hear. Understanding how frogs hear could lead to improved hearing aids.

Autistic kids who best peers at math show different brain organization

Children with autism and average IQs consistently demonstrated superior math skills compared with nonautistic children in the same IQ range, according to a study by researchers at the Stanford University School of Medicine and Lucile Packard Children’s Hospital.

“There appears to be a unique pattern of brain organization that underlies superior problem-solving abilities in children with autism,” said Vinod Menon, PhD, professor of psychiatry and behavioral sciences and a member of the Child Health Research Institute at Packard Children’s.

The autistic children’s enhanced math abilities were tied to patterns of activation in a particular area of their brains — an area normally associated with recognizing faces and visual objects.

Menon is senior author of the study, published online Aug. 17 in Biological Psychiatry. Postdoctoral scholar Teresa Iuculano, PhD, is the lead author.

Children with autism have difficulty with social interactions, especially interpreting nonverbal cues in face-to-face conversations. They often engage in repetitive behaviors and have a restricted range of interests.

But in addition to such deficits, children with autism sometimes exhibit exceptional skills or talents, known as savant abilities. For example, some can instantly recall the day of the week of any calendar date within a particular range of years — for example, that May 21, 1982, was a Friday. And some display superior mathematical skills.

“Remembering calendar dates is probably not going to help you with academic and professional success,” Menon said. “But being able to solve numerical problems and developing good mathematical skills could make a big difference in the life of a child with autism.”

The idea that people with autism could employ such skills in jobs, and get satisfaction from doing so, has been gaining ground in recent years.

The participants in the study were 36 children, ages 7 to 12. Half had been diagnosed with autism. The other half was the control group. Each group had 14 boys and four girls. (Autism disproportionately affects boys.) All participants had IQs in the normal range and showed normal verbal and reading skills on standardized tests administered as part of the recruitment process for the study. But on the standardized math tests that were administered, the children with autism outperformed children in the control group.

After the math test, researchers interviewed the children to assess which types of problem-solving strategies each had used: simply remembering an answer they already knew; counting on their fingers or in their heads; or breaking the problem down into components — a comparatively sophisticated method called decomposition. The children with autism displayed greater use of decomposition strategies, suggesting that more analytic strategies, rather than rote memory, were the source of their enhanced abilities.

Then, the children worked on solving math problems while their brain activity was measured in an MRI scanner, in which they had to lie down and remain still. The brain scans of the autistic children revealed an unusual pattern of activity in the ventral temporal occipital cortex, an area specialized for processing visual objects, including faces.

“Our findings suggest that altered patterns of brain organization in areas typically devoted to face processing may underlie the ability of children with autism to develop specialized skills in numerical problem solving,” Iuculano said.

“These findings not only empirically confirm that high-functioning children with autism have especially strong number-problem-solving abilities, but show that this cognitive strength in math is based on different patterns of functional brain organization,” said Carl Feinstein, MD, director of the Center for Autism and Related Disorders at Packard Children’s and professor of psychiatry and behavioral sciences at the School of Medicine. He was not involved in the study.

Menon added that previous research “has focused almost exclusively on weaknesses in children with autism. Our study supports the idea that the atypical brain development in autism can lead, not just to deficits, but also to some remarkable cognitive strengths. We think this can be reassuring to parents.”

The research team is now gathering data from a larger group of children with autism to learn more about individual differences in their mathematical abilities. Menon emphasized that not all children with autism have superior math abilities, and that understanding the neural basis of variations in problem-solving abilities is an important topic for future research.

(Image: Corbis)


Remembering to Remember Supported by Two Distinct Brain Processes

You plan on shopping for groceries later and you tell yourself that you have to remember to take the grocery bags with you when you leave the house. Lo and behold, you reach the check-out counter and you realize you’ve forgotten the bags.

Remembering to remember — whether it’s grocery bags, appointments, or taking medications — is essential to our everyday lives. New research sheds light on two distinct brain processes that underlie this type of memory, known as prospective memory.

The research is published in Psychological Science, a journal of the Association for Psychological Science.

To investigate how prospective memory is processed in the brain, psychological scientist Mark McDaniel of Washington University in St. Louis and colleagues had participants lie in an fMRI scanner and asked them to press one of two buttons to indicate whether a word that popped up on a screen was a member of a designated category. In addition to this ongoing activity, participants were asked to try to remember to press a third button whenever a special target popped up. The task was designed to tap into participants’ prospective memory, or their ability to remember to take certain actions in response to specific future events.

When McDaniel and colleagues analyzed the fMRI data, they observed that two distinct brain activation patterns emerged when participants made the correct button press for a special target.

When the special target was not relevant to the ongoing activity — such as a syllable like “tor” — participants seemed to rely on top-down brain processes supported by the prefrontal cortex. In order to answer correctly when the special syllable flashed up on the screen, the participants had to sustain their attention and monitor for the special syllable throughout the entire task. In the grocery bag scenario, this would be like remembering to bring the grocery bags by constantly reminding yourself that you can’t forget them.

When the special target was integral to the ongoing activity, such as a whole word like “table,” participants recruited a different set of brain regions, and they didn’t show sustained activation in these regions. The findings suggest that remembering what to do when the special target was a whole word didn’t require the same type of top-down monitoring. Instead, the target word seemed to act as an environmental cue that prompted participants to make the appropriate response, like reminding yourself to bring the grocery bags by leaving them near the front door.

“These findings suggest that people could make use of several different strategies to accomplish prospective memory tasks,” says McDaniel.

McDaniel and colleagues are continuing their research on prospective memory, examining how this phenomenon might change with age.

(Image: Shutterstock)


A new study in Biological Psychiatry explores the influence of oxytocin

Difficulty in registering and responding to the facial expressions of other people is a hallmark of autism spectrum disorder (ASD). Relatedly, functional imaging studies have shown that individuals with ASD display altered brain activations when processing facial images.

The hormone oxytocin plays a vital role in the social interactions of both animals and humans. In fact, multiple studies conducted with healthy volunteers have provided evidence for beneficial effects of oxytocin in terms of increased trust, improved emotion recognition, and preference for social stimuli.

This combination of scientific work led German researchers to hypothesize about the influence of oxytocin in ASD. Dr. Gregor Domes, from the University of Freiburg and first author of the new study, explained: “In the present study, we were interested in the question of whether a single dose of oxytocin would change brain responses to social compared to non-social stimuli in individuals with autism spectrum disorder.”

They found that oxytocin did show an effect on social processing in the individuals with ASD, “suggesting that oxytocin may help to treat a basic brain function that goes awry in autism spectrum disorders,” commented Dr. John Krystal, Editor of Biological Psychiatry.

To conduct this study, they recruited fourteen individuals with ASD and fourteen control volunteers, all of whom completed a face- and house-matching task while undergoing imaging scans. Each participant completed this task and scanning procedure twice, once after receiving a nasal spray containing oxytocin and once after receiving a nasal spray containing placebo. The order of the sprays was randomized, and the tests were administered one week apart.

Using two sets of stimuli in the matching task, one of faces and one of houses, allowed the researchers not only to compare the effects of the oxytocin and placebo administrations, but also to distinguish effects specific to social stimuli from non-specific effects on more general brain processing.

What they found was intriguing. The data indicate that oxytocin specifically increases responses of the amygdala to social stimuli in individuals with ASD. The amygdala, the authors explain, “has been associated with processing of emotional stimuli, threat-related stimuli, face processing, and vigilance for salient stimuli.”

This finding suggests oxytocin might promote the salience of social stimuli in ASD. Increased salience of social stimuli might support behavioral training of social skills in ASD.

These data support the idea that oxytocin may be a promising approach in the treatment of ASD and could stimulate further research, even clinical trials, on oxytocin as an add-on treatment for individuals with autism spectrum disorder.

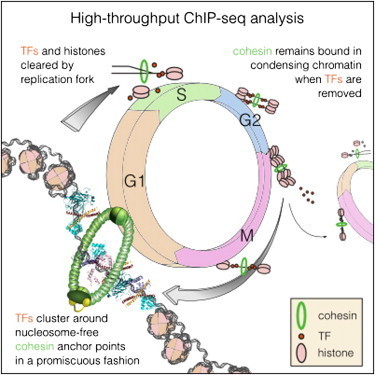

The cells in our bodies can divide as often as once every 24 hours, creating a new, identical copy. DNA binding proteins called transcription factors are required for maintaining cell identity. They ensure that daughter cells have the same function as their mother cell, so that, for example, muscle cells can contract or pancreatic cells can produce insulin. However, each time a cell divides, the specific binding pattern of the transcription factors is erased and has to be restored in both mother and daughter cells. Previously it was unknown how this process works, but now scientists at Karolinska Institutet have discovered the importance of particular protein rings encircling the DNA and how these function as the cell’s memory.

The DNA in human cells encodes a multitude of proteins required for a cell to function. When, where and how proteins are expressed is determined by regulatory DNA sequences and a group of proteins, known as transcription factors, that bind to these DNA sequences. Each cell type can be distinguished based on its transcription factors, and a cell can in certain cases be directly converted from one type to another, simply by changing the expression of one or more transcription factors. It is critical that the pattern of transcription factor binding in the genome be maintained. During each cell division, the transcription factors are removed from DNA and must find their way back to the right spot after the cell has divided. Despite many years of intense research, no general mechanism has been discovered which would explain how this is achieved.

“The problem is that there is so much DNA in a cell that it would be impossible for the transcription factors to find their way back within a reasonable time frame. But now we have found a possible mechanism for how this cellular memory works, and how it helps the cell remember the order that existed before the cell divided, helping the transcription factors find their correct places,” explains Jussi Taipale, professor at Karolinska Institutet and the University of Helsinki, and head of the research team behind the discovery.

The results are now being published in the scientific journal Cell. The research group has produced the most complete map yet of transcription factors in a cell. They found that a large protein complex called cohesin is positioned as a ring around the two DNA strands that are formed when a cell divides, marking virtually all the places on the DNA where transcription factors were bound. Cohesin encircles the DNA strand as a ring does around a piece of string, and the protein complexes that replicate DNA can pass through the ring without displacing it. Since the two new DNA strands are caught in the ring, only one cohesin is needed to mark the two, thereby helping the transcription factors to find their original binding region on both DNA strands.

“More research is needed before we can be sure, but so far all experiments support our model,” says Martin Enge, assistant professor at Karolinska Institutet.

Transcription factors play a pivotal role in many illnesses, including cancer as well as many hereditary diseases. The discovery that virtually all regulatory DNA sequences bind to cohesin may also end up having more direct consequences for patients with cancer or hereditary diseases. Cohesin would function as an indicator of which DNA sequences might contain disease-causing mutations.

“Currently we analyse DNA sequences that are directly located in genes, which constitute about three per cent of the genome. However, most mutations that have been shown to cause cancer are located outside of genes. We cannot analyse these in a reliable manner; the genome is simply too large. Analysing only the DNA sequences that bind to cohesin, roughly one per cent of the genome, would allow us to analyse an individual’s mutations and make it much easier to conduct studies to identify novel harmful mutations,” Martin Enge concludes.

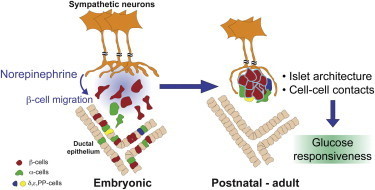

The human body is a complicated system of blood vessels, nerves, organs, tissue and cells, each with a specific job to do. When all are working together, it’s a symphony of form and function as each instrument plays its intended role.

Biologist Rejji Kuruvilla and her fellow researchers uncovered what happens when one instrument is not playing its part.

Kuruvilla, along with graduate students Philip Borden and Jessica Houtz, both from the Biology Department at Johns Hopkins University’s Krieger School of Arts and Sciences, and Dr. Steven Leach from the McKusick-Nathans Institute of Genetic Medicine at the Johns Hopkins School of Medicine, recently published a paper in the journal Cell Reports exploring whether “cross-talk,” or reciprocal signaling, takes place between the neurons in the sympathetic nervous system and the tissues that the nerves connect to. In this case, the target tissues, called islets, were in the pancreas.

“We knew that sympathetic neurons need molecular signals from the tissues that they connect with, to grow and survive,” said Kuruvilla. “What we did not know was whether the neurons would reciprocally signal to the target tissues to instruct them to grow and mature. It made sense to focus on the pancreas because of previous studies done in diabetic animal models where sympathetic nerves within the pancreas were found to retract early on in the disease, suggesting that dysfunction of the nerves could be an early trigger for pancreatic defects.”

The researchers spent approximately three years working with lab mice to test the various scenarios in which signaling between sympathetic neurons and islet cells might take place. The experiments focused on what effects removing the sympathetic nerves would have on pancreas development in newborn mice.

Previous studies had shown that pancreatic cells release a signal of their own, a nerve growth protein, that directs the sympathetic nerves toward the pancreas and provides necessary nutrition to sustain the nerves.

In turn, Kuruvilla’s team found that in mutant mice, the removal of the sympathetic neurons resulted in deformities in the architecture of the pancreatic islet cells and defects in insulin secretion and glucose metabolism.

Pancreatic islets are highly organized functional micro-organs with a defined size, shape and distinctive arrangement of endocrine cells. It’s this marriage of form and function, with cells clustered close together, that makes islet cell function more efficient.

However, the mutant mice, with their sympathetic neurons removed, had islet formations that were misshapen, sported lesions and developed in a patchy, uneven manner. Because of their dysfunctional islet cell development, postnatal mice did not secrete enough insulin when confronted with high glucose, and had high blood glucose levels as a result. Increased levels of blood glucose in humans are a hallmark of diabetes.

It’s known in neuroscience that the neurons in question from the sympathetic nervous system control the body’s “fight or flight” response and communicate with connected tissues by releasing a chemical messenger called norepinephrine. The release of norepinephrine also plays an important role in the development and maturation of islets, said Kuruvilla.

Using sympathetic neurons and islet cells grown together in a culture dish, the researchers observed that islet cells move toward the nerves and identified norepinephrine as the nerve signal that causes the movement of the islet cells.

“Seeing how these islet cells were responding to sympathetic neurons both in a dish and the effects of removing the nerves in a whole animal on islet shape and functions were pretty remarkable,” said Borden, lead author of the paper. “It was clear to us that sympathetic neurons were key to how islets were developing, something no one else had shown.”

Kuruvilla said these studies, identifying sympathetic nerves as a critical player in organizing pancreatic cells during development and influencing their later function, could add to a better understanding of how to treat diabetes in the future. The research also supports considering external factors such as nerves and blood vessels when transplanting islet cells for the treatment of diabetes in patients.

“This study reveals interactions between two co-developing systems, sympathetic neurons and pancreatic islet cells, that have important implications for peripheral organ development, and for regeneration of these tissues following injury or disease,” said Kuruvilla.

Imaging in mental health and improving the diagnostic process

What are some of the most troubling numbers in mental health? Six to 10 – the number of years it can take to properly diagnose a mental health condition. Dr. Elizabeth Osuch, a Researcher at Lawson Health Research Institute and a Psychiatrist at London Health Sciences Centre and the Department of Psychiatry at Western University, is helping to end misdiagnosis by looking for a ‘biomarker’ in the brain that will help diagnose and treat two commonly misdiagnosed disorders.

Major Depressive Disorder (MDD), otherwise known as Unipolar Disorder, and Bipolar Disorder (BD) are two common disorders. Currently, diagnosis is made by patient observation and verbal history. Mistakes are not uncommon, and patients can find themselves going from doctor to doctor, receiving improper diagnoses and being prescribed medications to little effect.

Dr. Osuch looked to identify a ‘biomarker’ in the brain which could help optimize the diagnostic process. She examined youth who were diagnosed with either MDD or BD (15 patients in each group) and imaged their brains with an MRI to see if there was a region of the brain which corresponded with the bipolarity index (BI). The BI is a diagnostic tool which encompasses varying degrees of bipolar disorder, identifying symptoms and behavior in order to place a patient on the spectrum.

What she found was that activation of the putamen correlated positively with BD. This is the region of the brain that controls motor skills, and it has a strong link to reinforcement and reward. This speaks directly to the symptoms of bipolar disorder. “The identification of the putamen in our positive correlation may indicate a potential trait marker for the symptoms of mania in bipolar disorder,” states Dr. Osuch.

In order to reach this conclusion, the study approached mental health research from a different angle. “The unique aspect of this research is that, instead of dividing the patients by psychiatric diagnoses of bipolar disorder and unipolar depression, we correlated their functional brain images with a measure of bipolarity which spans across a spectrum of diagnoses,” Dr. Osuch explains. “This approach can help to uncover a ‘biomarker’ for bipolarity, independent of the current mood symptoms or mood state of the patient.”

Moving forward, Dr. Osuch will repeat the study with more patients, seeking to confirm that the link with putamen activation holds in larger numbers of patients. The hope is that one day there could be a definitive biological marker which could help differentiate the two disorders, leading to a faster diagnosis and optimal care.

In using a correlational approach, a novel method in the field, Dr. Osuch uncovered results in patients that extend beyond verbal history and observation. These results may go on to change the way mental health is diagnosed, and subsequently treated, worldwide.


New research at Rutgers University may help shed light on how and why nervous system changes occur and what causes some people to suffer from life-threatening anxiety disorders while others are better able to cope.

Maureen Barr, a professor in the Department of Genetics, and a team of researchers found that the architectural structure of the six sensory brain cells in the roundworm, responsible for receiving information, undergoes major changes and becomes much more elaborate when the worm is put into a high stress environment.

Scientists have known for some time that changes in the tree-like dendrite structures that connect neurons in the human brain and enable our thought processes to work properly can occur under extreme stress, alter brain cell development and result in anxiety disorders like depression and Post Traumatic Stress Disorder, which affect millions of Americans each year.

What scientists don’t understand for sure, Barr says, is the cause behind these molecular changes in the brain.

“This type of research provides us necessary clues that ultimately could lead to the development of drugs to help those suffering with severe anxiety disorders,” Barr says.

In the study published today in Current Biology, scientists at Rutgers have identified six sensory nerve cells in the tiny, transparent roundworm known as C. elegans and an enzyme called KPC-1/furin, which triggers a chemical reaction in humans that is needed for essential life functions like blood-clotting.

While the enzyme also appears to play a role in the growth of tumors and the activation of several types of virus and diseases in humans, in the roundworm the enzyme enables its simple neurons to morph into new elaborately branched shapes when placed under adverse conditions.

Normally, this one-millimeter long worm develops from an embryo through four larval stages before molting into a reproductive adult. Put it under stressful conditions of overcrowding, starvation and high temperature and the worm transforms into an alternative larval stage known as the dauer that becomes so stress-resistant it can survive almost anything, including the Space Shuttle Columbia disaster in 2003, in which the worms were the only living things to survive.

“These worms that normally have a short life cycle turn into super worms when they go into the dauer stage and can live for months, although they are no longer able to reproduce,” Barr says.

What is so interesting to Barr is that when a perceived threat is over, these tiny creatures and their IL2 neurons return to a normal lifespan and reproductive state as if nothing had ever happened. Under a microscope, the complicated looking tree-like connectors that receive information are pruned back and the worm appears as it did before the trauma occurred.

This type of neural reaction differs in humans, who can suffer from extreme anxiety months or even years after the traumatic event even though they are no longer in a threatening situation.

The ultimate goal, Barr says, is to determine how and why the nervous system responds to stress. By identifying molecular pathways that regulate neuronal remodeling, scientists may apply this knowledge to develop future therapeutics.

When something gets in the way of our ability to see, we quickly pick up a new way to look, in much the same way that we would learn to ride a bike, according to a new study published in the Cell Press journal Current Biology on August 15.

Our eyes are constantly on the move, darting this way and that four to five times per second. Now researchers have found that the precise manner of those eye movements can change within a matter of hours. This discovery by researchers from the University of Southern California might suggest a way to help those with macular degeneration better cope with vision loss.

“The system that controls how the eyes move is far more malleable than the literature has suggested,” says Bosco Tjan of the University of Southern California. “We showed that people with normal vision can quickly adjust to a temporary occlusion of their foveal vision by adapting a consistent point in their peripheral vision as their new point of gaze.”

The fovea refers to the small, center-most portion of the retina, which is responsible for our high-resolution vision. We move our eyes to direct the fovea to different parts of a scene, constructing a picture of the world around us. In those with age-related macular degeneration, progressive loss of foveal vision leads to visual impairment and blindness.

In the new study, MiYoung Kwon, Anirvan Nandy, and Tjan simulated a loss of foveal vision in six normally sighted young adults by blocking part of a visual scene with a gray disc that followed the individuals’ eye gaze. Those individuals were then asked to complete demanding object-following and visual-search tasks. Within three hours of working on those tasks, people showed a remarkably fast and spontaneous adjustment of eye movements. Once developed, that change in their “point of gaze” was retained over a period of weeks and was reengaged whenever their foveal vision was blocked.

Tjan and his team say they were surprised by the rate of this adjustment. They note that patients with macular degeneration frequently do adapt their point of gaze, but in a process that takes months, not days or hours. They suggest that practice with a visible gray disc like the one used in the study might help speed that process of visual rehabilitation along. The discovery also reveals that the oculomotor (eye movement) system prefers control simplicity over optimality.

“Gaze control by the oculomotor system, although highly automatic, is malleable in the same sense that motor control of the limbs is malleable,” Tjan says. “This finding is potentially very good news for people who lose their foveal vision due to macular diseases. It may be possible to create the right conditions for the oculomotor system to quickly adjust,” Kwon adds.

Dragonflies can see by switching “on” and “off”

Researchers at the University of Adelaide have discovered a novel and complex visual circuit in a dragonfly’s brain that could one day help to improve vision systems for robots.

Dr Steven Wiederman and Associate Professor David O'Carroll from the University’s Centre for Neuroscience Research have been studying the underlying processes of insect vision and applying that knowledge in robotics and artificial vision systems.

Their latest discovery, published this month in The Journal of Neuroscience, is that the brains of dragonflies combine opposite pathways - both an ON and OFF switch - when processing information about simple dark objects.

“To perceive the edges of objects and changes in light or darkness, the brains of many animals, including insects, frogs, and even humans, use two independent pathways, known as ON and OFF channels,” says lead author Dr Steven Wiederman.

“Most animals will use a combination of ON switches with other ON switches in the brain, or OFF and OFF, depending on the circumstances. But what we show occurring in the dragonfly’s brain is the combination of both OFF and ON switches. This happens in response to simple dark objects, likely to represent potential prey to this aerial predator.

“Although we’ve found this new visual circuit in the dragonfly, it’s possible that many other animals could also have this circuit for perceiving various objects,” Dr Wiederman says.

The researchers were able to record their results directly from ‘target-selective’ neurons in dragonflies’ brains. They presented the dragonflies with moving lights that changed in intensity, as well as both light and dark targets.

“We discovered that the responses to the dark targets were much greater than we expected, and that the dragonfly’s ability to respond to a dark moving target is from the correlation of opposite contrast pathways: OFF with ON,” Dr Wiederman says.

“The exact mechanisms that occur in the brain for this to happen are of great interest in visual neurosciences generally, as well as for solving engineering applications in target detection and tracking. Understanding how visual systems work can have a range of outcomes, such as in the development of neural prosthetics and improvements in robot vision.

“A project is now underway at the University of Adelaide to translate much of the research we’ve conducted into a robot, to see if it can emulate the dragonfly’s vision and movement. This project is well underway and once complete, watching our autonomous dragonfly robot will be very exciting,” he says.


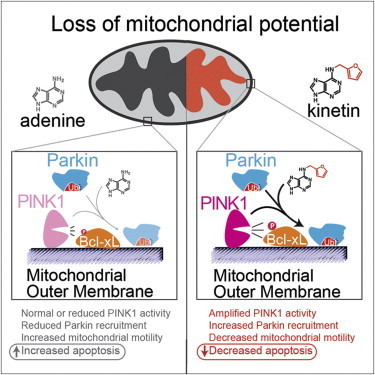

The active ingredient in an over-the-counter skin cream might do more than prevent wrinkles. Scientists have discovered that the drug, called kinetin, also slows or stops the effects of Parkinson’s disease on brain cells.

Scientists identified the link through biochemical and cellular studies, and the research team is now testing the drug in animal models of Parkinson’s. The research is published in the August 15, 2013 issue of the journal Cell.

“Kinetin is a great molecule to pursue because it’s already sold in drugstores as a topical anti-wrinkle cream,” says HHMI investigator Kevan Shokat of the University of California, San Francisco. “So it’s a drug we know has been in people and is safe.”

Parkinson’s disease is a degenerative disease that causes the death of neurons in the brain. Initially, the disease affects one’s movement and causes tremors, difficulty walking, and slurred speech. Later stages of the disease can cause dementia and broader health problems. In 2004, researchers studying an Italian family with a high prevalence of early-onset Parkinson’s disease discovered mutations in a protein called PINK1 associated with the inherited form of the disease.

Since then, studies have shown that PINK1 normally wedges into the membrane of damaged mitochondria, the organelles responsible for energy generation inside cells, and causes another protein, Parkin, to be recruited to them. Neurons require high levels of energy production, so mitochondrial damage can lead to neuronal death. However, when Parkin is present on damaged mitochondria, studding the mitochondrial surface, the cell is able to survive the damage. In people who inherit mutations in PINK1, however, Parkin is never recruited to the organelles, leading to more frequent neuronal death than usual.

Shokat and his colleagues wanted to develop a way to turn on or crank up PINK1 activity, thereby preventing an excess of cell death, in those with inherited Parkinson’s disease. But turning on activity of a mutant enzyme is typically more difficult than blocking activity of an overactive version.

“When we started this project, we really thought that there would be no conceivable way to make something that directly turns on the enzyme,” says Shokat. “For any enzyme we know that causes a disease, we have ways to make inhibitors but no real ways to turn up activity.”

His team expected it would have to find a less direct way to mimic the activity of PINK1 and recruit Parkin. In the hopes of more fully understanding how PINK1 works, they began investigating how PINK1 binds to ATP, the energy molecule that normally turns it on. In one test, instead of adding ATP to the enzymes, they added different ATP analogs, versions of ATP with altered chemical groups that slightly change its shape. Scientists typically must engineer new versions of proteins to be able to accept these analogs, since they don’t fit into the typical ATP binding site. But to Shokat’s surprise, one of the analogs, kinetin triphosphate (KTP), turned on the activity of not only normal PINK1, but also the mutated version, which doesn’t bind ATP.

“This drug does something that chemically we just never thought was possible,” says Shokat. “But it goes to show that if you find the right key for the right lock, you’ll be able to open the door.”

To test whether the binding of KTP to PINK1 led to the same consequences as the usual ATP binding, Shokat’s group measured the activity of PINK1 directly, as well as the downstream consequences of this activity, including the amount of Parkin recruited to the mitochondrial surface, and the levels of cell death. Adding the precursor of KTP, kinetin, to cells—both those with PINK1 mutations and those with normal physiology—amplified the activity of PINK1, increased the level of Parkin on damaged mitochondria, and decreased levels of neuron death, they found.

“What we have here is a case where the molecular target has been shown to be important to Parkinson’s in human genetic studies,” says Shokat. “And now we have a drug that specifically acts on this target and reverses the cellular causes of the disease.”

The similar results in cells with and without PINK1 mutations suggest that kinetin, which is a precursor to KTP, could be used not only to treat Parkinson’s patients with a known PINK1 mutation, but also to slow progression of the disease in those without a family history by decreasing cell death.

Shokat is now performing experiments on the effects of kinetin in mice with various forms of Parkinson’s disease. However, the usefulness of animal models in Parkinson’s research has been debated, and therefore the positive results from the cellular data, he says, are as good an indicator as results in animals that this drug has potential to treat Parkinson’s in humans. Initial human studies will likely focus on the small population of patients with PINK1 mutations, and if successful in that group the drug could later be tested in a wider array of Parkinson’s patients.

A Genetic Answer to the Alzheimer’s Riddle?

What if we could pinpoint a hereditary cause for Alzheimer’s, and intervene to reduce the risk of the disease? We may be closer to that goal, thanks to a team at the University of Kentucky. Researchers affiliated with the UK Sanders-Brown Center on Aging have completed new work in Alzheimer’s genetics; the research is detailed in a paper published today in the Journal of Neuroscience.

Emerging evidence indicates that, much like in the case of high cholesterol, some Alzheimer’s disease risk is inherited while the remainder is environmental. Family and twin studies suggest that about 70 percent of total Alzheimer’s risk is hereditary.

Recently published studies identified several variations in DNA sequence that each modify Alzheimer’s risk. In their work, the UK researchers investigated how one of these sequence variations may act. They found that a “protective” genetic variation near a gene called CD33 correlated strongly with how the CD33 mRNA was assembled in the human brain. The authors found that a form of CD33 that lacked a critical functional domain correlated with reduced risk of Alzheimer’s disease. CD33 is thought to inhibit clearance of amyloid beta, a hallmark of Alzheimer’s disease.

The results obtained by the UK scientists indicate that inhibiting CD33 may reduce Alzheimer’s risk. A drug tested for acute myeloid leukemia targets CD33, suggesting the potential for treatments based on CD33 to mitigate the risk for Alzheimer’s disease. Additional studies must be conducted before this treatment approach could be tested in humans.

There is no evidence that impaired blood flow or blockage in the veins of the neck or head is involved in multiple sclerosis, says a McMaster University study.

The research, published online by PLOS ONE Wednesday, found no evidence of abnormalities in the internal jugular or vertebral veins or in the deep cerebral veins of any of 100 patients with multiple sclerosis (MS) compared with 100 people who had no history of any neurological condition.

The study contradicts a controversial theory that says that MS, a chronic, neurodegenerative and inflammatory disease of the central nervous system, is associated with abnormalities in the drainage of venous blood from the brain. In 2008, Italian researcher Paolo Zamboni said that angioplasty, a blockage-clearing procedure, would help MS patients with a condition he called chronic cerebrospinal venous insufficiency (CCSVI). This caused a flood of public response in Canada and elsewhere, with many concerned individuals lobbying for support of the ‘Liberation Treatment’ to clear the veins, as advocated by Zamboni.

“This is the first Canadian study to provide compelling evidence against the involvement of CCSVI in MS,” said principal investigator Ian Rodger, a professor emeritus of medicine in the Michael G. DeGroote School of Medicine. “Our findings bring a much needed perspective to the debate surrounding venous angioplasty for MS patients.”

In the study, all participants received an ultrasound of the deep cerebral veins and neck veins as well as magnetic resonance imaging (MRI) of the neck veins and brain. Each participant had both examinations performed on the same day. The McMaster research team included a radiologist and two ultrasound technicians who had trained in the Zamboni technique at the Department of Vascular Surgery of the University of Ferrara.

Researchers from King’s College London and the University of Nottingham have identified neuroimaging markers in the brain which could help predict whether people with psychosis respond to antipsychotic medications or not.

In approximately half of young people experiencing their first episode of psychosis (FEP), the symptoms do not improve considerably with the initial medication prescribed, increasing the risk of subsequent episodes and worse outcomes. Identifying individuals at greatest risk of not responding to existing medications could help in the search for improved medications, and may eventually help clinicians personalize treatment plans.

In a study published today in JAMA Psychiatry, researchers used structural Magnetic Resonance Imaging (MRI) to scan the brains of 126 individuals – 80 presenting with FEP, and 46 healthy controls. Participants had an MRI scan shortly after their FEP, and another assessment 12 weeks later, to establish whether symptoms had improved following the first treatment with antipsychotic medications.

The researchers examined a particular feature of the brain called “cortical gyrification”, the extent of folding of the cerebral cortex and a marker of how it has developed. They found that the individuals who did not respond to treatment already had a significant reduction in gyrification across multiple brain regions, compared to patients who did respond and to individuals without psychosis. This reduced gyrification was particularly present in brain areas considered important in psychosis, such as the temporal and frontal lobes. Those who responded to treatment were virtually indistinguishable from the healthy controls.

The researchers also investigated whether the differences could be explained by the type of diagnosis of psychosis (e.g., with or without affective symptoms, such as depression or elated mood). They found that reduced gyrification predicted non-response to treatment independently of the diagnosis.

Dr Paola Dazzan from the Department of Psychosis Studies at King’s College London’s Institute of Psychiatry, and senior author of the paper, says: “Our study provides crucial evidence of a neuroimaging marker that, if validated, could be used early in psychosis to help identify those people less likely to respond to medications. It is possible that the alterations we observed are due to differences in the way the brain has developed early on in people who do not respond to medication compared to those who do.”

She continues: “There have been few advances in developing novel antipsychotic drugs over the past 50 years and we still face the same problems with a sub-group of people who do not respond to the drugs we currently use. We could envisage using a marker like this one to identify people who are least likely to respond to existing medications and focus our efforts on developing new medication specifically adapted to this group. In the longer term, if we were able to identify poor responders at the outset, we may be able to formulate personalized treatment plans for that individual patient.”

Dr Lena Palaniyappan from the University of Nottingham adds: “All of us have complex and varying patterns of folding in our brains. For the first time we are showing that the measurement of these variations could potentially guide us in treating psychosis. It is possible that people with specific patterns of brain structure respond better to treatments other than antipsychotics that are currently in use. Clearly, the time is ripe for us to focus on utilising neuroimaging to guide treatment decisions.”

Psychosis is a term used to indicate mental health disorders that present with symptoms like hallucinations (such as hearing voices) or delusions (unshakeable beliefs based on the person’s altered perception of reality, which may not correspond to the way others see the world). Psychotic episodes are present in conditions such as schizophrenia and bipolar disorder.

Approximately 1 in 100 people in England have at least one episode of psychosis throughout their lives. In most cases, psychosis develops during late adolescence (15 or above) or adulthood. Treatment involves a combination of antipsychotic medication, psychological therapies and social support. Many people with psychosis go on to lead ordinary lives and for about 60% of people, the symptoms disappear within 12 months from onset. However, for others, treatment is less straightforward and many do not respond to the initial antipsychotic treatment prescribed by their doctor. Early response to antipsychotic medication is known to be associated with better outcome and fewer subsequent episodes, and intervening early with effective treatments is therefore important.